
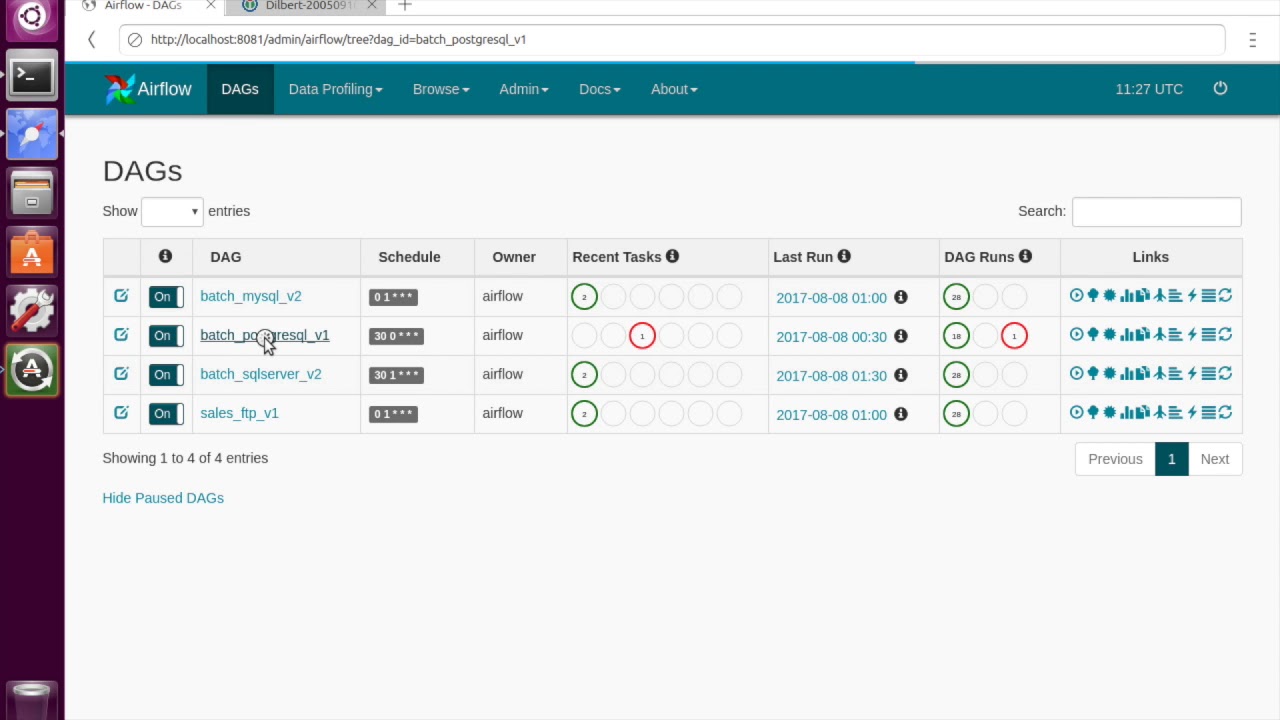

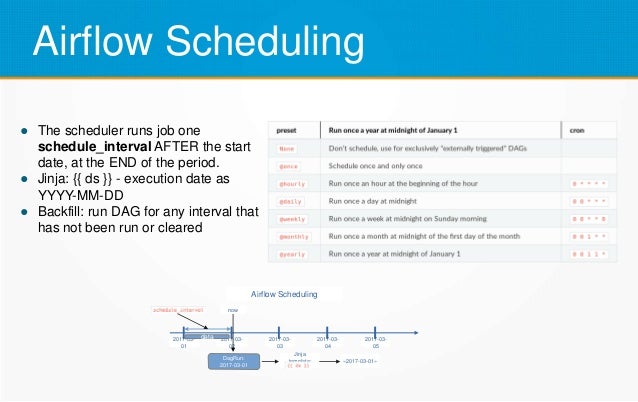

11/18/2023

Apache Airflow documentation

This guide shows you how to write an Apache Airflow directed acyclic graph (DAG) that runs in a Cloud Composer environment.

In Airflow, a DAG is a data pipeline defined in Python code. Each DAG represents a collection of tasks you want to run and is organized to show relationships between tasks in the Airflow UI. The mathematical properties of DAGs make them useful for building data pipelines: dependencies point in one direction, and because the graph has no cycles, a task can never end up waiting on itself.

Because Airflow is 100% code, knowing the basics of Python is all it takes to get started writing DAGs. However, writing DAGs that are efficient, secure, and scalable requires some Airflow-specific finesse (see "DAG writing best practices in Apache Airflow" in the Astronomer documentation). You can dynamically generate DAGs when using the `@dag` decorator or the `with DAG(...)` context manager, and Airflow will automatically register them.

The docstring of the `DAG` class (a subclass of `LoggingMixin`) summarizes the model. A DAG is a collection of tasks with directional dependencies. A DAG also has a schedule, a start date, and an optional end date. For each schedule (say daily or hourly), the DAG needs to run each individual task as its dependencies are met. Certain tasks have the property of depending on their own past, meaning that they can't run until their previous schedule (and upstream tasks) are completed.

A DAG Run is an object representing an instantiation of the DAG in time. Any time the DAG is executed, a DAG Run is created and all tasks inside it are executed. The status of the DAG Run depends on the states of its tasks (see "DAG Runs" in the Airflow documentation).
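To make "run each task as its dependencies are met" concrete, here is a minimal plain-Python sketch. It is not Airflow's scheduler and uses no Airflow APIs. The task names, the `run_once` helper, and the simplified state names are all hypothetical, and the rule "the run succeeds only if every task succeeds" is a simplification for illustration.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
}


def run_once(dependencies, callables):
    """Run every task once in dependency order; return (run_state, task_states)."""
    task_states = {}
    # static_order() yields each task only after all of its upstream tasks.
    for task_id in TopologicalSorter(dependencies).static_order():
        # Do not run a task whose upstream tasks did not all succeed.
        if any(task_states[up] != "success" for up in dependencies[task_id]):
            task_states[task_id] = "upstream_failed"
            continue
        try:
            callables[task_id]()
            task_states[task_id] = "success"
        except Exception:
            task_states[task_id] = "failed"
    # Simplified rule: the run succeeds only if every task succeeded.
    all_ok = all(state == "success" for state in task_states.values())
    return ("success" if all_ok else "failed"), task_states


# Stand-in task bodies that do nothing; real tasks would do the actual work.
callables = {task_id: (lambda: None) for task_id in dependencies}
run_state, task_states = run_once(dependencies, callables)
```

If the dependency mapping contains a cycle, `TopologicalSorter` raises `CycleError`, which is the plain-Python counterpart of the "acyclic" requirement.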

Airflow is a platform that lets you build and run workflows. A workflow is represented as a DAG (a Directed Acyclic Graph) and contains individual pieces of work called Tasks, arranged with dependencies and data flows taken into account. A DAG specifies the dependencies between Tasks, and the order in which to execute them and run retries.

You cannot access the Airflow context dictionary outside of an Airflow task. To access the context in a `@task`-decorated task or a PythonOperator task, add a `**context` argument to your task function. This makes the context available as a dictionary in your task. The context is also passed to the `execute` method of any traditional or custom operator.
